On 8-12 November 2021, several AIM researchers will participate at the 22nd International Society for Music Information Retrieval Conference (ISMIR 2021). ISMIR is the leading conference in the field of music informatics, and is currently the top-cited publication for Music & Musicology (source: Google Scholar).

The UKRI Centre for Doctoral Training in Artificial Intelligence and Music (AIM) will have a strong presence at ISMIR 2021, both in terms of numbers and overall impact.

In the Technical Programme, the following papers are authored/co-authored by AIM students:

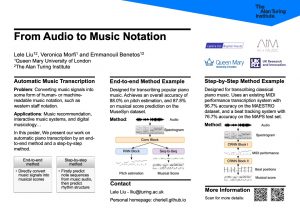

In the Late Breaking Demos track, the following extended abstract is authored/co-authored by AIM PhD student Lele Liu:

As part of the ISMIR 2021 Lab Showcases, the Centre for Digital Music and AIM programme will be featured at the session.

Finally on conference organisation, AIM PhD student Lele Liu is coordinating the ISMIR 2021 Lab Showcases; AIM PhD student Elona Shatri is chairing the ISMIR Newcomer Initiative & Diversity and Inclusion, and AIM PhD students Yixiao Zhang and Huan Zhang are volunteers for the conference.

See you at ISMIR!